2.4. 一元线性回归¶

import numpy as np

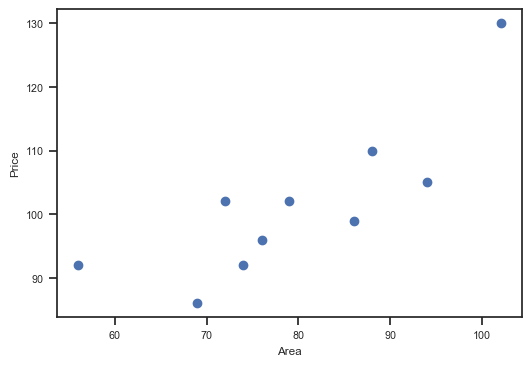

x = np.array([56, 72, 69, 88, 102, 86, 76, 79, 94, 74])

y = np.array([92, 102, 86, 110, 130, 99, 96, 102, 105, 92])import seaborn as sns

custom_params = {"figure.figsize": (6, 4),

"font.sans-serif":"Arial Unicode MS",

'axes.unicode_minus': False}

sns.set_theme(style="ticks", font_scale=0.7, rc=custom_params)from matplotlib import pyplot as plt

%matplotlib inline

plt.scatter(x, y)

plt.xlabel('Area')

plt.ylabel('Price')

正如上面所说,线性回归即通过线性方程去拟合数据点。那么,我们可以令该 1 次函数的表达式为:

2.5. 平方损失函数 (square loss)¶

正如上面所说,如果一个数据点为 (, ),那么它对应的误差 (Error) 就为:

上面的误差往往也称之为「 残差」 (Residual)。但是在机器学习中,我们更喜欢称作「 损失 」 (Loss),即真实值和预测值之间的偏离程度。那么,对 个全部数据点而言,其对应的残差损失总和就为:

更进一步,在线性回归中,我们一般使用残差的平方和来表示所有样本点的误差。公式如下:

- 残差:

- 平方损失函数:

2.6. 最小二乘法代数求解¶

最小二乘法是用于求解线性回归拟合参数 的一种常用方法。最小二乘法中的「二乘」代表平方,最小二乘也就是最小平方。而这里的平方就是指代上面的平方损失函数。

简单来讲,最小二乘法也就是求解平方损失函数最小值的方法。那么,到底该怎样求解呢?这就需要使用到高等数学中的知识。推导如下:

首先,平方损失函数为:

我们的目标是求取平方损失函数 最小时,对应的 。首先求 的 1 阶偏导数:

然后,我们令 以及 ,解得:

到目前为止,已经求出了平方损失函数最小时对应的 参数值,这也就是最佳拟合直线。

2.7. 最小二乘法矩阵求解¶

学习完上面的内容,相信你已经了解了什么是最小二乘法,以及如何使用最小二乘法进行线性回归拟合。上面,我们采用了求偏导数的方法,并通过代数求解找到了最佳拟合参数 的值。这里尝试另外一种方法,即通过矩阵的变换来计算参数 。

首先,一元线性函数的表达式为 ,表达成矩阵形式为:

即:

式中, 为 ,而 则是 矩阵。然后,平方损失函数为:

通过对公式 实施矩阵计算乘法分配律得到:

在该公式中 与 皆为相同形式的 矩阵,由此两者相乘属于线性关系,所以等价转换如下:

此时,对 矩阵求偏导数 得到:

当矩阵 满秩时, ,且 。所以有 ,并最终得到:

2.8. 线性回归 scikit-learn 实现¶

sklearn.linear_model.LinearRegression(fit_intercept=True, normalize=False, copy_X=True, n_jobs=1)

# - fit_intercept: 默认为 True,计算截距项。

# - normalize: 默认为 False,不针对数据进行标准化处理。

# - copy_X: 默认为 True,即使用数据的副本进行操作,防止影响原数据。

# - n_jobs: 计算时的作业数量。默认为 1,若为 -1 则使用全部 CPU 参与运算。from sklearn.linear_model import LinearRegression

# 定义线性回归模型

model = LinearRegression()

model.fit(x.reshape(x.shape[0], 1), y) # 训练, reshape 操作把数据处理成 fit 能接受的形状

# 得到模型拟合参数

model.intercept_, model.coef_(41.33509168550617, array([0.75458428]))model.predict([[150]])array([154.52273298])2.9. 线性回归综合案例¶

import pandas as pd

url = 'https://github.com/NishadKhudabux/Boston-House-Price-Prediction/raw/refs/heads/main/Boston.csv'

# column_names = ['CRIM', 'ZN', 'INDUS', 'CHAS', 'NOX', 'RM', 'AGE', 'DIS', 'RAD', 'TAX', 'PTRATIO', 'B', 'LSTAT']

df = pd.read_csv(url, header=0)

df.head()df.columnsIndex(['CRIM', 'ZN', 'INDUS', 'CHAS', 'NOX', 'RM', 'AGE', 'DIS', 'RAD', 'TAX',

'PTRATIO', 'LSTAT', 'MEDV'],

dtype='object')df.columns = [x.lower() for x in df.columns]

features = df[["crim", "rm", "lstat"]]

features.describe()target = df["medv"] # 目标值数据

from sklearn.model_selection import train_test_split

# 将特征和目标值分开

X = features

y = target

# 使用 train_test_split 分割数据,将 30% 的数据作为测试集

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

# 查看数据集的形状

print(X_train.shape, y_train.shape, X_test.shape, y_test.shape)(354, 3) (354,) (152, 3) (152,)

model = LinearRegression() # 建立模型

model.fit(X_train, y_train) # 训练模型

model.coef_, model.intercept_ # 输出训练后的模型参数和截距项(array([-0.12362012, 5.09661773, -0.60817459]), -1.2405510324250422)上面的单元格中,我们输出了线性回归模型的拟合参数。也就是最终的拟合线性函数近似为:

其中,, 和 分别对应数据集中 CRIM, RM 和 LSTAT 列。接下来,向训练好的模型中输入测试集的特征得到预测值。

preds = model.predict(X_test) # 输入测试集特征进行预测

preds # 预测结果array([25.95010416, 31.04855815, 18.2497264 , 26.3019832 , 19.72042823,

23.46871069, 17.71366141, 15.49650764, 22.17984511, 20.73388426,

18.17724891, 18.93873596, -6.35437003, 23.00971662, 20.67587505,

26.66807591, 18.01857996, 3.23670389, 37.08732784, 18.10256111,

26.53443298, 27.41308736, 13.99188946, 26.47265903, 18.95326059,

14.39064862, 23.15222471, 20.49447042, 18.61054869, 19.6808132 ,

18.20651772, 27.11073871, 25.4330687 , 19.43551217, 15.84148017,

17.98728092, 32.92433015, 22.70715097, 20.73270312, 25.97803613,

13.33760731, 29.08053218, 37.95164944, 19.29216769, 26.10063642,

16.60989702, 16.51288715, 27.36660097, 19.7455285 , 29.24021979,

21.30002643, 31.4765798 , 18.52548865, 28.64078242, 34.84253501,

24.04067339, 19.66522085, 31.69779263, 25.46484613, 16.07543617,

27.42948149, 32.77789044, 29.81113896, 19.35780323, 28.9413907 ,

11.89916031, 20.25014766, 26.78687738, 29.74091303, 17.07827625,

19.61952361, 27.74870143, 12.54395385, 25.61226266, 23.79596517,

3.20619077, 22.67424043, 36.46170373, 17.33409647, 11.75192421,

23.3913465 , 10.41607184, 23.07717374, 7.37683104, 22.2962212 ,

28.08812367, 22.33078153, 27.69005761, 26.63422339, 22.73619744,

23.28376198, 6.38335834, 23.39696086, 21.1061108 , 12.01510444,

24.39925364, 23.50282292, -0.88178284, 18.92844379, 18.04521922,

21.58806596, 25.34794842, 8.78508592, 23.26431999, 24.73085877,

14.82814703, 20.99784584, 28.4225529 , 24.65135513, 27.77945214,

12.15141611, 19.86691593, 26.80930743, 24.31730346, 31.34755289,

19.45326717, 33.72874163, 17.1505415 , 20.52024491, 27.91244682,

19.63717391, 28.21563418, 7.48914676, 23.16332186, 26.64949711,

24.82176435, 28.09870799, 32.28309414, 20.54206054, 36.32384001,

10.74004071, 26.56401533, 21.60804452, 19.38688193, 7.67884687,

23.39226334, 24.46020314, 31.09393553, 29.75192884, 18.94581935,

20.26932503, 28.06686746, 22.02045463, 11.67109054, 5.16317683,

24.16233599, 20.62623892, 18.33470988, 16.17338714, 40.34645114,

21.0067563 , 19.39310502])from sklearn.metrics import mean_absolute_error, mean_squared_error

# import mean_absolute_error, mean_squared_error

mae_ = mean_absolute_error(y_test, preds)

mse_ = mean_squared_error(y_test, preds)

print("scikit-learn MAE: ", mae_)

print("scikit-learn MSE: ", mse_)scikit-learn MAE: 4.111995393754922

scikit-learn MSE: 29.975964330767464